Why We Left Microsoft and Rebuilt Our Operations on Google Workspace, Slack, and Salesforce, and What We Learned

Kumar Kritanshu · 2 days ago · 8 min read

The Shift to AI-Native Operations

How Truffle's migration from Microsoft to Google Workspace, Slack, and Salesforce became the foundation for an entirely new way of running enterprise operations.

Enterprise AI has reached an inflection point, and the decisions organizations make about their operational infrastructure in 2026 will define their competitive position for the decade that follows. The organizations pulling ahead are not the ones with the most sophisticated models or the largest technology budgets; they are the ones that have fundamentally rethought how intelligence moves through their operations. That is a structural question, and it demands a structural answer.

Most enterprises treated AI as an addition to existing workflows.

The result was predictable: capable technology sitting on top of fragmented systems, siloed decisions, and teams operating on different versions of the same data.

The returns stayed flat because the underlying operating model stayed unchanged. AI amplifies how a business runs. When the foundation is weak, amplification makes the weakness more visible, not less consequential.

The question that now defines enterprise competitiveness is precise: where does intelligence live inside the organization, and how fast can it act when it matters? CIOs and RevOps leaders who answer that question clearly are building a compounding advantage. Those who treat it as a technology procurement decision are accumulating operational debt that compounds just as fast.

Truffle's response to that question was a kind of decision most organizations avoid: not an upgrade, but a redesign of how work actually happens.

Truffle has transitioned from the Microsoft stack (Teams + Microsoft AI ecosystem) to Google Workspace + Slack, anchored by Salesforce.

This is more than a tooling preference; it is a directional bet on how AI-native organizations will operate over the next decade.

The Why: A Strategic Shift, Not a Tool Swap

The decision to move away from Microsoft's ecosystem was grounded in one observable reality: the pace of AI development inside Microsoft's productivity stack had fallen behind in ways that directly affected how work gets done.

Copilot, while capable, remained a layer on top of existing tools rather than an intelligence system woven into them. Bing-powered search, the backbone of Microsoft's AI reasoning layer, had lost meaningful ground to Google's Gemini in contextual accuracy, cross-document reasoning, and the speed at which new capabilities were reaching users.

For an organization building AI-native operations, the compounding rate of a platform matters as much as its current capability. Google Workspace was compounding faster, and the gap was widening.

That is where the divergence began.

Innovation Velocity Matters

Google’s Gemini ecosystem is compounding at a pace that directly impacts operations:

Native AI across Gmail, Docs, Sheets, and Drive

Context-aware reasoning across files and time

Rapid iteration cycles tied to real usage patterns

This is not a feature rollout. This is infrastructure evolution.

Agentic Workflows Need Fluid Systems

Modern operations demand:

Cross-tool reasoning

Persistent memory

Context-aware execution

Rigid ecosystems create friction here.

What matters is not individual tool capability. What matters is how intelligence flows across systems.

Why Google Workspace: Gemini as the Operational Memory Layer

For Truffle, Google Workspace has moved beyond productivity. It now behaves like a living intelligence system, with Gemini at the center of this shift.

What Gemini Actually Unlocks

Gemini operates as a persistent intelligence layer across what an organization creates, stores, and communicates inside Google Workspace, within the access permissions each user already has. It reads contracts stored years ago, interprets compensation structures inside Sheets, cross-references data across Docs and Drive, and produces computed outputs grounded in actual business records rather than approximations.

As of 2025, Gemini in Workspace provides business users with more than two billion AI assists every month (AI Capabilities in Workspace), a figure that reflects genuine operational adoption rather than experimental use. More significantly, Gemini Enterprise now functions as an advanced agentic platform that brings AI to every employee, for every workflow (AI Tool for a Better way to Work), including the ability to build custom agents without writing code. For a Chief of Staff, a RevOps leader, or a finance function, this changes the nature of operational decision-making entirely.

This changes the role of knowledge inside a company.

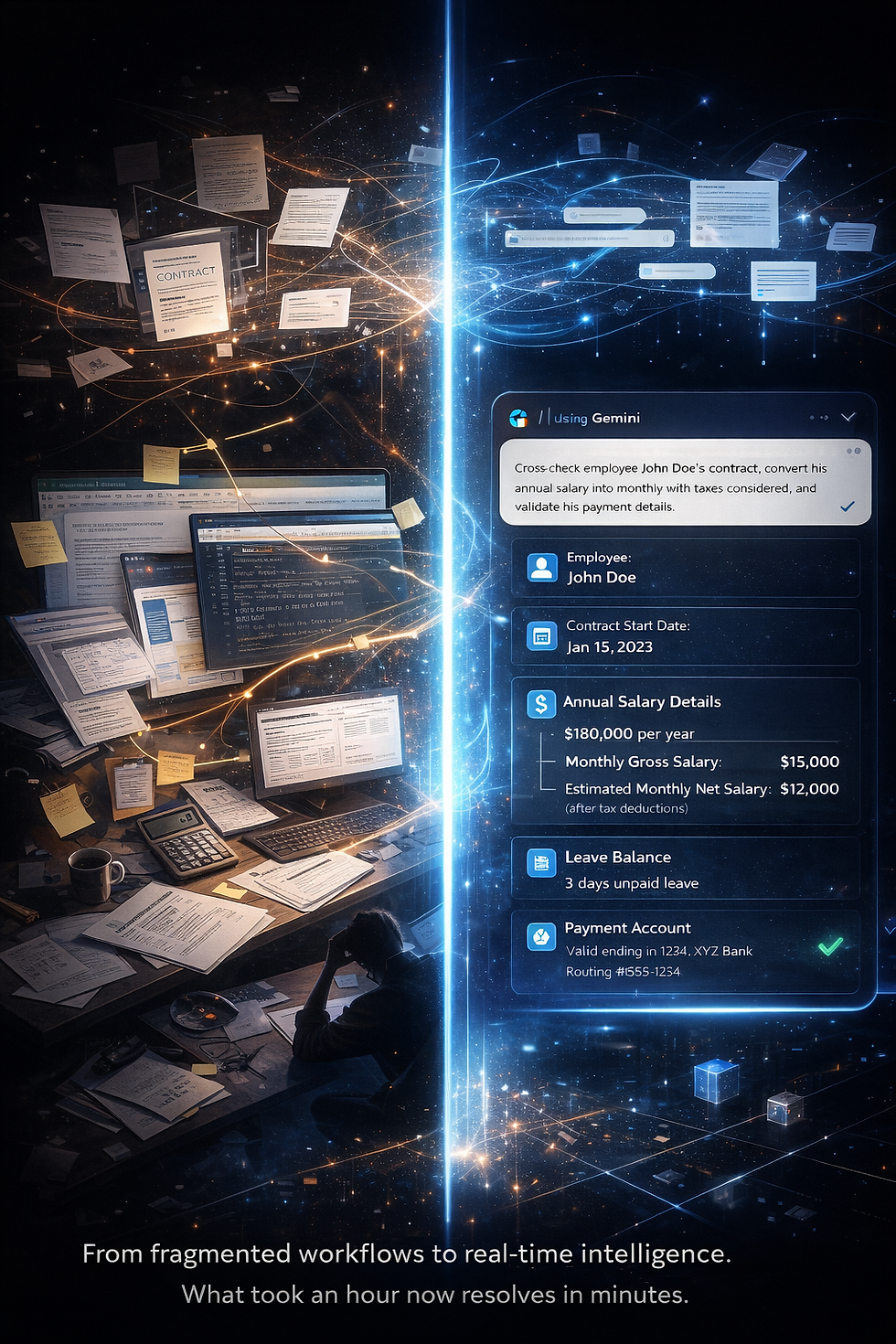

Operational Use Case: How Gemini Reduced a 60-Minute Finance Workflow to Under 3 Minutes

A recurring operational workflow: validating monthly salary payouts across employees.

Historically, this involved:

Opening contracts

Checking compensation structures

Accounting for unpaid leaves

Converting annual CTC into monthly payouts

Verifying bank details

A manual, time-consuming process that becomes error-prone at scale.

With Gemini:

Employee contracts retrieved instantly from Drive

Compensation terms interpreted directly from documents

Leave data cross-referenced with internal records

Annual salary converted to monthly payouts with tax context

Payment details validated across files

Time to completion: under three minutes, down from about 60 minutes by hand.

This is not automation. This is intelligence applied to operations.

For any organization asking how AI delivers measurable operational value, this is the answer, and it is available today.
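The arithmetic behind the salary check is simple enough to write down. Below is a minimal sketch, assuming a simplified pay model: monthly gross is annual CTC divided by 12, unpaid leave is deducted pro rata per working day, and tax, allowances, and statutory deductions are ignored. The `PayoutCheck` record and its field names are hypothetical stand-ins for what would be pulled from contracts, leave records, and payment files. They are not a Gemini output format, and real payroll rules vary by jurisdiction and contract.

```python
from dataclasses import dataclass
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


@dataclass
class PayoutCheck:
    """Hypothetical record assembled from a contract, leave data, and payment details."""
    employee_id: str
    annual_ctc: Decimal          # annual cost-to-company, from the contract
    working_days: int            # working days in the payout month (assumed input)
    unpaid_leave_days: int       # from internal leave records
    bank_account_on_contract: str
    bank_account_on_file: str
    proposed_payout: Decimal     # amount queued for payment


def expected_monthly_payout(c: PayoutCheck) -> Decimal:
    # Simplified model: CTC spread evenly over 12 months, minus a
    # pro-rata deduction per unpaid working day. Taxes are ignored.
    monthly = c.annual_ctc / 12
    per_day = monthly / c.working_days
    payout = monthly - per_day * c.unpaid_leave_days
    return payout.quantize(CENT, rounding=ROUND_HALF_UP)


def validate(c: PayoutCheck) -> list[str]:
    """Return human-readable issues; an empty list means the payout checks out."""
    issues: list[str] = []
    if c.working_days <= 0 or not 0 <= c.unpaid_leave_days <= c.working_days:
        issues.append("invalid working or leave days")
        return issues
    expected = expected_monthly_payout(c)
    if c.proposed_payout != expected:
        issues.append(f"payout mismatch: proposed {c.proposed_payout}, expected {expected}")
    if c.bank_account_on_contract != c.bank_account_on_file:
        issues.append("bank details differ between contract and payment file")
    return issues


if __name__ == "__main__":
    check = PayoutCheck("E-001", Decimal("1200000"), 22, 2, "ACC-1", "ACC-1", Decimal("90909.09"))
    print(validate(check))  # []
```

Using `Decimal` rather than floats keeps currency rounding explicit; the point of the sketch is that each manual step in the list above becomes a checkable rule once the inputs are retrieved.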

What This Means Structurally

Gemini introduces three critical capabilities:

Persistent Memory: Every document becomes part of a queryable system

Contextual Reasoning: Outputs are derived from actual business data

Cross-Document Intelligence: Workflows span across files, time, and formats

This is why Google Workspace becomes:

Knowledge Layer → Computation Layer → Intelligence Layer

Why Slack: Execution in the Salesforce Ecosystem

If Google Workspace handles knowledge, Slack handles execution. This is where the Microsoft Teams vs. Slack decision becomes clear.

Slack’s Evolution Under Salesforce

Slack's evolution under Salesforce has accelerated beyond what most enterprises have registered. In March 2026, Salesforce announced more than 30 new AI capabilities for Slackbot alone, the most significant overhaul of the platform since its acquisition. Slackbot now functions as a full-spectrum enterprise agent: it takes meeting notes across any video provider, executes tasks through third-party tools via Model Context Protocol, operates outside the Slack application on users' desktops, and connects natively to Agentforce — Salesforce's AI agent development platform — routing work requests and operational prompts without human intervention.

AI-enabled applications built for Slack have grown 690% year over year. The platform is no longer a communication tool. It is becoming the single conversational interface through which employees interact with AI agents, enterprise applications, and each other simultaneously.

What Slack Enables

Workflow-Centric Channels

Create channels directly from Salesforce events

Trigger conversations tied to deals, cases, or accounts

Maintain full context within execution threads
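Tying a channel to a deal, case, or account mostly comes down to a stable naming convention. The sketch below is one hypothetical convention, not a Salesforce or Slack feature. It relies on three facts: Slack channel names may contain only lowercase letters, digits, hyphens, and underscores, up to 80 characters; Salesforce key prefixes `001`, `006`, and `500` mark Accounts, Opportunities, and Cases; and the 18-character record ID is safe to lowercase, whereas the 15-character form is case-sensitive. The resulting name could then be passed to Slack's `conversations.create` Web API method, which is not shown here.

```python
import re

# Standard Salesforce key prefixes for the record types discussed above.
PREFIX_TO_KIND = {"001": "acct", "006": "deal", "500": "case"}
MAX_LEN = 80  # Slack's channel-name length limit


def channel_name_for(record_id: str, record_name: str) -> str:
    """Build a Slack-safe channel name for a Salesforce record (hypothetical convention)."""
    if len(record_id) != 18:
        # 15-character IDs are case-sensitive, so lowercasing them could collide.
        raise ValueError("use the 18-character, case-insensitive record ID")
    kind = PREFIX_TO_KIND.get(record_id[:3], "rec")
    # Collapse anything outside [a-z0-9] into single hyphens.
    slug = re.sub(r"[^a-z0-9]+", "-", record_name.lower()).strip("-")
    suffix = record_id.lower()
    # Trim the readable part so "<kind>-<slug>-<id>" stays within 80 characters.
    budget = MAX_LEN - len(kind) - len(suffix) - 2
    slug = slug[:budget].rstrip("-")
    return f"{kind}-{slug}-{suffix}" if slug else f"{kind}-{suffix}"


if __name__ == "__main__":
    print(channel_name_for("0065g00000AbCdEAAV", "Acme Corp: Q3 Renewal"))
    # deal-acme-corp-q3-renewal-0065g00000abcdeaav
```

Embedding the record ID makes the mapping deterministic: the same Salesforce event always resolves to the same channel, which keeps the execution thread attached to its record.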

AI Agents Inside Channels

Slack is evolving into a host environment for AI agents:

Agents that respond to operational queries

Agents that trigger workflows

Agents that coordinate across teams

Real-Time Execution Layer

Slack bridges intent → action:

Notifications tied to business events

Decision-making in context

Immediate execution loops

The Architecture

This is where the system becomes clear:

“Slack is the execution layer. Salesforce is the system of record. AI agents are the system of action.”

Together:

Salesforce stores truth

Slack activates workflows

AI agents execute decisions

This creates a closed-loop operating system.
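The three roles in that quote can be sketched as a single loop. This is a minimal, in-memory illustration under stated assumptions: `RecordStore` stands in for Salesforce, `Channel` for a Slack channel, and `agent_decide` for an AI agent. All three names and the discount rule are hypothetical, and no real Salesforce, Slack, or Agentforce API is called. What the sketch shows is the "closed" part: the agent's outcome is written back to the system of record rather than living only in chat.

```python
from dataclasses import dataclass, field


@dataclass
class RecordStore:
    """Stand-in for the system of record (Salesforce in the article)."""
    records: dict[str, dict] = field(default_factory=dict)

    def update(self, record_id: str, **changes) -> None:
        self.records.setdefault(record_id, {}).update(changes)


@dataclass
class Channel:
    """Stand-in for the execution surface (a Slack channel in the article)."""
    messages: list[str] = field(default_factory=list)

    def post(self, text: str) -> None:
        self.messages.append(text)


def agent_decide(record: dict) -> str:
    # Hypothetical policy: escalate large discounts, otherwise approve.
    return "escalate" if record.get("discount_pct", 0) > 20 else "approve"


def handle_event(store: RecordStore, channel: Channel, record_id: str) -> str:
    record = store.records[record_id]                   # 1. read the stored truth
    channel.post(f"Review requested for {record_id}")   # 2. activate the workflow in context
    decision = agent_decide(record)                     # 3. the agent acts on the record
    store.update(record_id, status=decision)            # 4. write back, closing the loop
    channel.post(f"{record_id}: {decision}")
    return decision


if __name__ == "__main__":
    store = RecordStore({"OPP-1": {"discount_pct": 25}})
    channel = Channel()
    print(handle_event(store, channel, "OPP-1"))  # escalate
```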

The Tradeoff: Integration Requires Intentional Design

Google Workspace and Slack operate as distinct ecosystems, and bringing them together into a coherent operational system requires deliberate architectural work. Data synchronization, workflow orchestration, and identity and permissions management each demand careful design decisions upfront. Organizations that treat this as a plug-and-play transition will encounter friction. Organizations that approach it as an architectural investment will build a system that compounds in value over time.

This requires effort.

Why That’s an Advantage

Composability is the architectural property that separates AI-native organizations from enterprises still optimizing for control. A system designed for adaptability allows individual layers to evolve independently: the intelligence layer can be upgraded without rebuilding the workflow layer, and workflows can be restructured without touching the data foundation. That separation of concerns is precisely what creates long-term operational leverage. Rigid ecosystems trade that flexibility for short-term coherence. Composable systems trade short-term convenience for a structural advantage that widens over time.

This creates long-term leverage.

Introducing Truffle OS

This transition is part of a broader initiative:

Truffle OS

An AI-native operating system for enterprise operations. Built on three layers:

Google Workspace

Knowledge + Computation Layer

Documents

Financial models

Contracts

Institutional memory

Powered by Gemini.

Slack

Execution + Collaboration Layer

Real-time coordination

Workflow orchestration

Agent interaction

Salesforce

Data + Workflow Backbone

Customer data

Revenue processes

Operational workflows

What This Represents

This is not infrastructure modernization. This is operating model transformation.

From:

Static workflows

Fragmented tools

Manual coordination

To:

Intelligent systems

Connected workflows

Agent-assisted execution

Use Cases Across the Stack

A. Immediate Use Cases

Finance Validation Workflows

Salary computation and validation

Expense reconciliation

Payment verification

Contract Intelligence

Extracting clauses from agreements

Identifying obligations and risks

Cross-referencing vendor terms

Cross-Document Querying

Asking questions across multiple files

Generating summaries from distributed data

Automated Reporting

Pulling insights from Sheets

Generating summaries in Docs

Sharing outputs directly in Slack

B. Emerging Use Cases

AI Agents in Slack

Agents handling approval workflows

Agents coordinating internal requests

Agents managing operational queries

Real-Time GTM Coordination

Sales signals triggering Slack workflows

Marketing and sales alignment in real time

Faster deal cycles through contextual execution

Auto-Created Dashboards

Data pulled from Salesforce

Processed via Gemini

Delivered into Slack channels

C. Longer-Horizon Use Cases

Autonomous Departments

Finance workflows handled by AI agents

HR operations managed through intelligent systems

Support workflows resolved through AI-first layers

AI-Driven Decision Orchestration

Systems recommending actions

Agents executing decisions

Humans validating edge cases
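One common way to implement "agents execute, humans validate edge cases" is a routing gate in front of the agent. The sketch below assumes each recommendation carries a confidence score in [0, 1] and a monetary impact; how that score is produced is out of scope. The class names and the default thresholds (0.9 confidence, 10,000 in impact) are hypothetical and would need tuning per workflow.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_EXECUTE = "auto_execute"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Recommendation:
    action: str
    confidence: float  # assumed score in [0, 1]
    amount: float      # monetary impact of the action


def route(rec: Recommendation, min_confidence: float = 0.9,
          max_auto_amount: float = 10_000.0) -> Route:
    """Let agents execute only high-confidence, low-impact actions; everything else is an edge case."""
    if not 0.0 <= rec.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if rec.confidence >= min_confidence and rec.amount <= max_auto_amount:
        return Route.AUTO_EXECUTE
    return Route.HUMAN_REVIEW


if __name__ == "__main__":
    print(route(Recommendation("approve_expense", 0.95, 800.0)))     # Route.AUTO_EXECUTE
    print(route(Recommendation("approve_expense", 0.95, 50_000.0)))  # Route.HUMAN_REVIEW
```

Gating on both confidence and impact keeps low-risk volume automated while sending the decisions that matter most to a person, which is the division of labor the list above describes.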

Cross-System Reasoning Agents

Agents operating across Workspace, Slack, and Salesforce

Real-time context-aware execution

Continuous learning from outcomes

Direct Answers

Why did Truffle move to Google Workspace?

Truffle migrated to Google Workspace because Gemini's AI development velocity had outpaced Microsoft Copilot's in ways that directly affected operational performance. Gemini's ability to reason across documents, compute outputs from historical files, and operate as a persistent intelligence layer across Gmail, Docs, Sheets, and Drive made it the superior foundation for building AI-native operations at the speed Truffle required.

Why Slack over Microsoft Teams?

Slack, under Salesforce, has undergone a fundamental transformation from a communication platform into an agentic operating system. Its native integration with Salesforce, Agentforce AI agents, and the ability to create workflow channels directly tied to CRM data, deals, and cases gives it a structural advantage that Microsoft Teams — designed primarily for communication — does not offer. For an organization anchored in Salesforce, the decision was architectural, not preferential.

What is Truffle OS?

Truffle OS is an AI-native operating model, not a technology stack. It governs how intelligence moves through an organization, how decisions get made, and how execution happens without friction. The tools underneath it — currently Google Workspace, Slack, and Salesforce — are the best available expression of that model today. The principles are permanent. The tools are not.

How does Slack integrate with Salesforce for enterprise AI workflows?

Slack, under Salesforce, allows organizations to create channels directly from Salesforce CRM events, tying conversations to specific deals, cases, or accounts. Agentforce AI agents operate inside those channels, routing work, triggering workflows, and executing decisions without human intervention. This creates a closed-loop system in which Salesforce holds the data, Slack activates it, and AI agents act on it in real time.

The Direction Forward

The future of work is converging on three principles:

Memory becomes intelligence

Communication becomes execution

Systems become agents

Organizations that make the architectural decisions required to support all three principles simultaneously, and make them now, will open a compounding lead that later movers will find increasingly difficult to close.

This shift places Truffle on that trajectory: not as a user of AI tools, but as a builder of AI-native operations.

For Teams Building What’s Next

CIOs, RevOps leaders, and operators are starting to see the same pattern:

AI delivers measurable value only when data is unified, context is accessible at the moment decisions are made, and execution is fast enough to match the speed of the business.

We are actively working with organizations ready to make this shift.

Truffle OS. More to come.

Connect with us:

Email us: hello@trufflecorp.com

Visit our website: https://www.trufflecorp.com/contact-us
